TIRUCHIRAPPALLI, India: The integration of artificial intelligence (AI) into medical imaging can support diagnosis and reduce clinical workload. A new study has tested the performance of two newer AI image recognition models—called transformers—in the automatic detection of common dental conditions on panoramic radiographs. The results highlight their potential to support dentists with faster and more reliable assessments.

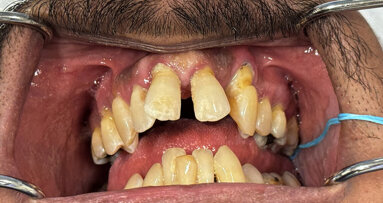

Undertaken by researchers in India, the study sought to determine whether software can reliably sort a panoramic radiograph into a condition category—caries, gingivitis, calculus and hypodontia—based on the overall radiographic pattern. The authors tested two transformer models that process an image differently and compared their diagnostic performance and speed. Their goal was to address limitations of traditional diagnostic methods, including subjectivity, variability between clinicians and difficulty detecting early or subtle lesions.

They trained, validated and tested the models using a dataset of over 5,000 annotated panoramic images sourced from multiple clinical repositories. The better-performing of the two models reached around 96% accuracy. The second model delivered comparable accuracy but ran more efficiently, which is a real-world consideration. Here, accuracy refers to how often the model assigned the correct category to the whole radiograph. Both models classified most radiographs correctly, but performance differed by condition.
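To make the definition of accuracy above concrete, the following minimal Python sketch computes whole-image accuracy and a per-condition breakdown. It is an illustration only, not the authors' evaluation code: the function names and the eight toy labels are hypothetical and do not come from the study.

```python
from collections import Counter

def accuracy(true_labels, predicted_labels):
    """Share of radiographs whose predicted category matches the true one."""
    if len(true_labels) != len(predicted_labels) or not true_labels:
        raise ValueError("label lists must be non-empty and of equal length")
    correct = sum(t == p for t, p in zip(true_labels, predicted_labels))
    return correct / len(true_labels)

def per_condition_recall(true_labels, predicted_labels):
    """For each true condition, the share of its radiographs classified correctly."""
    totals = Counter(true_labels)
    hits = Counter(t for t, p in zip(true_labels, predicted_labels) if t == p)
    return {condition: hits[condition] / totals[condition] for condition in totals}

# Toy labels invented for illustration (NOT data from the study).
true_labels = ["caries", "caries", "gingivitis", "gingivitis",
               "calculus", "calculus", "hypodontia", "hypodontia"]
predicted_labels = ["caries", "caries", "gingivitis", "calculus",
                    "calculus", "calculus", "hypodontia", "caries"]

print(accuracy(true_labels, predicted_labels))              # 0.75
print(per_condition_recall(true_labels, predicted_labels))
```

In this toy example, six of eight images are classified correctly overall, yet only half of the gingivitis and hypodontia images are, which is the sense in which a single accuracy figure can hide differences between conditions.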

How does this relate to AI products already used in clinics?

Tools such as Pearl Second Opinion, VideaHealth Detect AI and Align X-ray Insights typically support decision-making by highlighting regions of interest for specific findings on radiographs. In contrast, the present study evaluated whether the models tested could categorise a radiograph as a whole, rather than flag individual regions.

Overall, the study concluded that transformer-based systems offer a promising tool for automated diagnosis and have the potential to enhance early detection, reduce diagnostic errors and streamline workflows. Future work will focus on testing with larger and more diverse datasets and refining models to ensure reliability before routine clinical deployment.

The study, titled “A self attention based deep learning framework for accurate and efficient dental disease detection in OPG radiographs”, was published online on 21 January 2026 in Scientific Reports.



